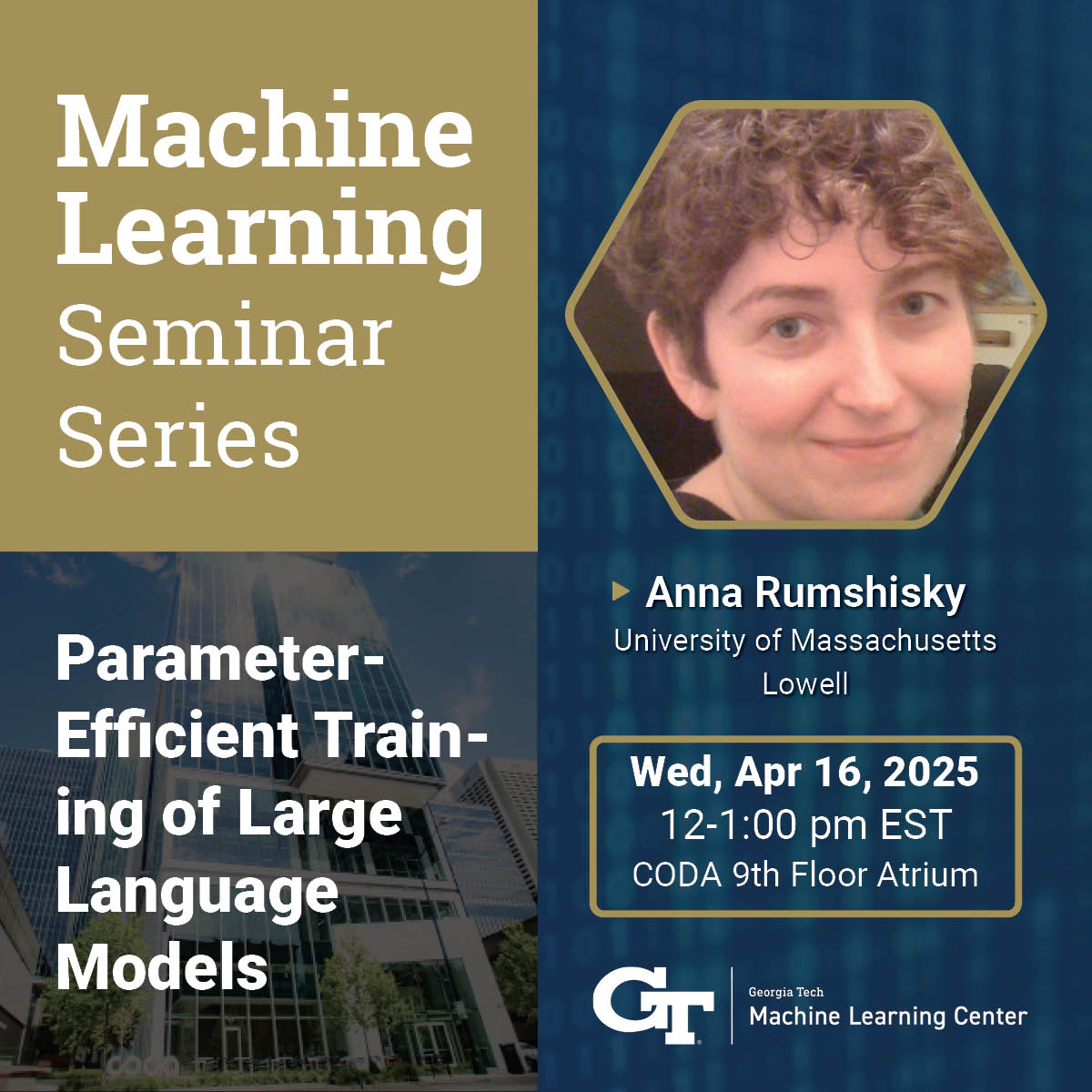

ML@GT Seminar Series | Parameter-Efficient Training of Large Language Models

Featuring Anna Rumshisky, University of Massachusetts Lowell

Abstract: Over the past few years, scaling large language models (LLMs) has become the go-to AI approach for solving increasingly complex tasks. However, as models have scaled toward hundreds of billions of parameters, not only training them from scratch but even fine-tuning them for specific tasks has become computationally expensive and unmanageable for most practitioners. This has led to a crisis in which only a few well-funded industry labs have the resources to develop high-quality LLMs. In response, numerous parameter-efficient techniques for fine-tuning LLMs have been developed, dramatically broadening access to LLM fine-tuning. However, until recently, no such methods were available for pre-training.

In this talk, I will present our recent work on ReLoRA, the first parameter-efficient method for training models from scratch, which uses low-rank updates to train high-rank networks, with results demonstrated on models of up to 1.3 billion parameters. I will also give a brief overview and taxonomy of the parameter-efficient training (PET) methods developed over the past two years, and discuss the advantages and limitations of different PET approaches with respect to parameter and memory efficiency, training speed, and inference throughput.
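The core idea attributed to ReLoRA above, reaching a high-rank weight change through a sequence of low-rank updates, can be illustrated with a little linear algebra. The sketch below is a toy, dependency-free illustration under stated assumptions, not the authors' implementation: it skips training entirely, and the per-cycle factors `B` and `A` come from a hypothetical helper `low_rank_factors` that builds them in echelon form so the rank argument is exact. Each cycle merges the rank-`r` product `B @ A` into the accumulated weight update and then starts over with fresh factors. A single low-rank update can never push the change past rank `r`, but after `k` merges the accumulated change here reaches rank `k * r` (at most `d`). The helper `trainable_params` (also hypothetical) does the parameter arithmetic for one `d_out x d_in` layer.

```python
import random
from fractions import Fraction


def matmul(X, Y):
    """Plain-Python matrix product X @ Y."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def add(X, Y):
    """Element-wise sum of two equally sized matrices."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(X, Y)]


def rank(M):
    """Exact rank via Gauss-Jordan elimination over the rationals."""
    rows = [[Fraction(x) for x in row] for row in M]
    r = 0
    for c in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                f = rows[i][c] / rows[r][c]
                rows[i] = [a - f * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r


def low_rank_factors(d, r, offset, rng):
    """Hypothetical stand-in for one cycle's trained factors B (d x r), A (r x d).

    Built in echelon form starting at row/column `offset`, so each cycle adds
    r new independent directions. Real trained factors are not constrained
    like this; the structure only makes the rank result exact."""
    B = [[0] * r for _ in range(d)]
    A = [[0] * d for _ in range(r)]
    for j in range(r):
        i = offset + j
        B[i][j] = 1
        A[j][i] = 1
        for row in range(i + 1, d):
            B[row][j] = rng.randint(-3, 3)
        for col in range(i + 1, d):
            A[j][col] = rng.randint(-3, 3)
    return B, A


def relora_style_updates(d=8, r=2, cycles=4, seed=0):
    """Merge one rank-r update per cycle; return the accumulated rank after each."""
    assert cycles * r <= d, "toy construction needs cycles * r <= d"
    rng = random.Random(seed)
    W = [[0] * d for _ in range(d)]  # accumulated change to the full weights
    ranks = []
    for k in range(cycles):
        B, A = low_rank_factors(d, r, k * r, rng)  # fresh factors each cycle
        delta = matmul(B, A)                        # rank of delta is at most r
        W = add(W, delta)                           # merge, then restart
        ranks.append(rank(W))
    return ranks


def trainable_params(d_in, d_out, r):
    """Per-cycle trainable parameters (B and A) vs. a full d_out x d_in update."""
    return r * (d_in + d_out), d_in * d_out


print(relora_style_updates())             # [2, 4, 6, 8]
print(trainable_params(4096, 4096, 128))  # (1048576, 16777216)
```

With trained rather than constructed factors, successive merged updates would not generally be exactly independent, so `k * r` is an upper bound rather than a guarantee. The toy only shows that restarting the low-rank factors lifts the rank ceiling that a single LoRA-style update imposes, while each cycle still trains 1/16 of the parameters of a full update in the 4096 x 4096, rank-128 example.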

Bio: Anna Rumshisky is an Associate Professor of Computer Science at the University of Massachusetts Lowell, where she leads the Text Machine Lab for NLP. Her primary research areas are artificial intelligence and large language models (LLMs), with a focus on efficient large-model training, model analysis, and interpretability. She holds a joint appointment as an Amazon Scholar at Amazon AGIF (Artificial General Intelligence Foundations), where she helps drive the scientific efforts behind large-scale foundational LLM training in an industry setting. She was previously a postdoctoral fellow at MIT CSAIL and received her PhD in Computer Science from Brandeis University. She received an NSF CAREER award in 2017 and the Best Thematic Paper award at NAACL-HLT 2019. Her research has been funded by the NSF, the NIH, and the Army Research Office, among others.

Media Contact

Shelli Hatcher, Program and Operations Manager